AONDevices: Listening without limits

Ultra-low-power edge AI processors and algorithms from AONDevices are set to banish the power limits holding back always-on sensors in wearables and IoT.

BY REBECCA POOL, TECHNOLOGY EDITOR

For years, always-on sensing has been held back by power budgets. Continuously monitoring surroundings for voice, sound and motion demands persistent processing that can quickly drain batteries, leaving designers treading a fine line between device responsiveness, feature sets and battery life. However, a raft of recent partnerships from California-based AONDevices, which has developed ultra-low-power edge AI processors and algorithms for always-on voice and sensor fusion applications, suggests these limitations are beginning to ease.

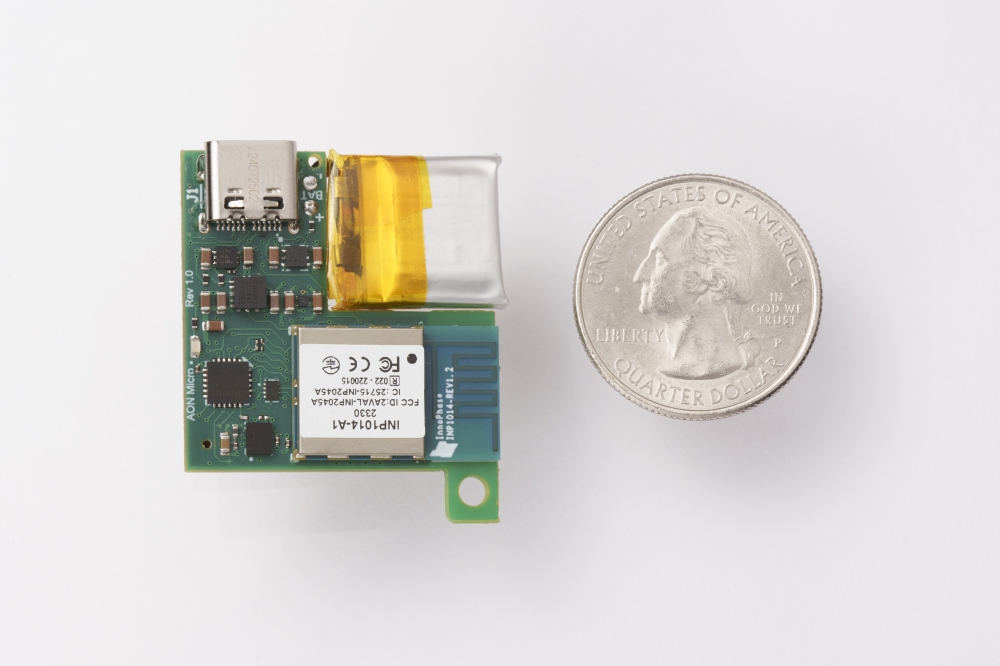

At this year's CES, AONDevices and three partners - P-Logic, Realtek and TDK - demonstrated how ultra-low-power edge AI can enable devices to continuously listen, sense, and respond, without sacrificing battery life. AONDevices integrated one of its processors into an always-on sensor tag from edge-AI device designer P-Logic, reporting battery lifetimes of several years from a single coin cell. Edge AI was also integrated into a wearable safety device, demonstrating voice confirmation, acoustic event recognition and environmental hazard monitoring.

P-Logic’s Beacon AI Tag is a compact, always-on sensing device designed to add acoustic, motion, and environmental intelligence to existing systems.

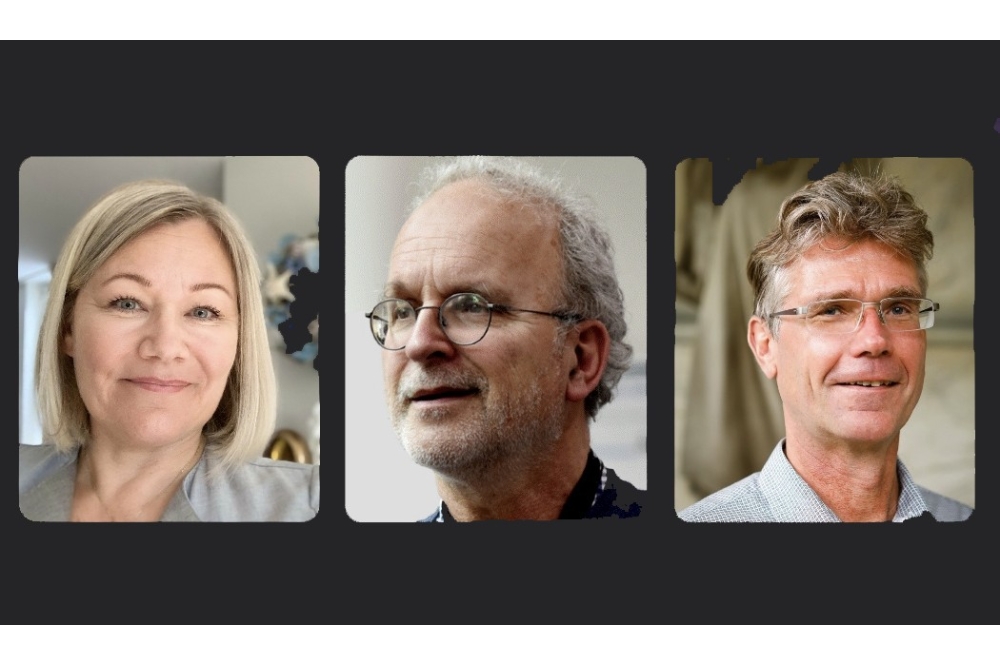

As Mouna Elkhatib, CEO and CTO of AONDevices, said: “Our focus is enabling meaningful intelligence under strict power limits. Running advanced machine learning features at super-low-power levels is what unlocks the next generation of smart wearables, tags, and safety devices. P-Logic’s products show how powerful these capabilities become when integrated into real designs.”

Mouna Elkhatib is CEO, CTO, and Co-Founder of AONDevices.

AONDevices also combined its processor with Realtek's Bluetooth Low Energy chipset and software stack to showcase always-on, context-aware smart remote controls. In addition, the company demonstrated how its edge AI processor, integrated with TDK InvenSense's digital microphone, could enable always-on voice and sound detection.

“This is where our super-low-power edge AI architecture shines,” said Elkhatib. “[Our processor] doesn’t just run AI at ultra-low power – it manages the entire sensing pipeline. By controlling the microphone and system flow at the hardware level, we eliminate the traditional trade-off between low power and high accuracy.”

Early days

Elkhatib co-founded AONDevices with Daniel Schoch and Adil Benyassine back in 2018 to provide low-power signal processing devices with integrated machine learning. With $2.6 million in seed funding following over the next couple of years, all three founders were keen to develop robust, low-cost on-chip AI-based algorithms to give battery-powered devices always-on capability for listening and responding to voice and audio.

Elkhatib had already worked at Conexant, a semiconductor provider of voice and audio processing chips. “I led most of the audio codecs in the industry here for laptops, PCs, smart speakers – all in the embedded space,” she told Power Electronics International. Along the way, she was also system lead and architect for the voice and audio chipsets for the Galaxy S7 and later-generation smartphones at US semiconductor firm Qualcomm, and was instrumental in designing low-power neuromorphic voice activation systems at AI computing firm BrainChip.

“I started AONDevices with a view to enabling always-on with as many features as possible,” she said. “From the beginning, our vision was to enable full sensor fusion in this [always-on] state, so not only voice and not only motion. We wanted to detect, for instance, an elderly person falling, a baby crying, someone calling for help at any time – use always-on [technology] to help human daily life.”

According to Elkhatib, processor development has been inspired by the human brain. “Think about it - we're always listening, our brains are always active in a lower power state, and wake up only when there is an interesting event to process,” she said. With this in mind, she and colleagues designed the hardware, algorithms - deep learning neural networks for audio applications - and software all together, tailoring their developments for ultra-low-power systems.

“We didn't want to just build a chip and worry about algorithms, we co-designed the machine learning algorithms with the chip, software and entire system in mind,” she said. “I don't believe in a generic machine learning chip that works for any AI workload, as that big chip will just [consume] too much power. Yet a tiny chip will not fit a programmer's machine learning models.”

“So now we are seeing industry moving towards co-designing the machine learning models with the hardware,” she added.

AI processors and more

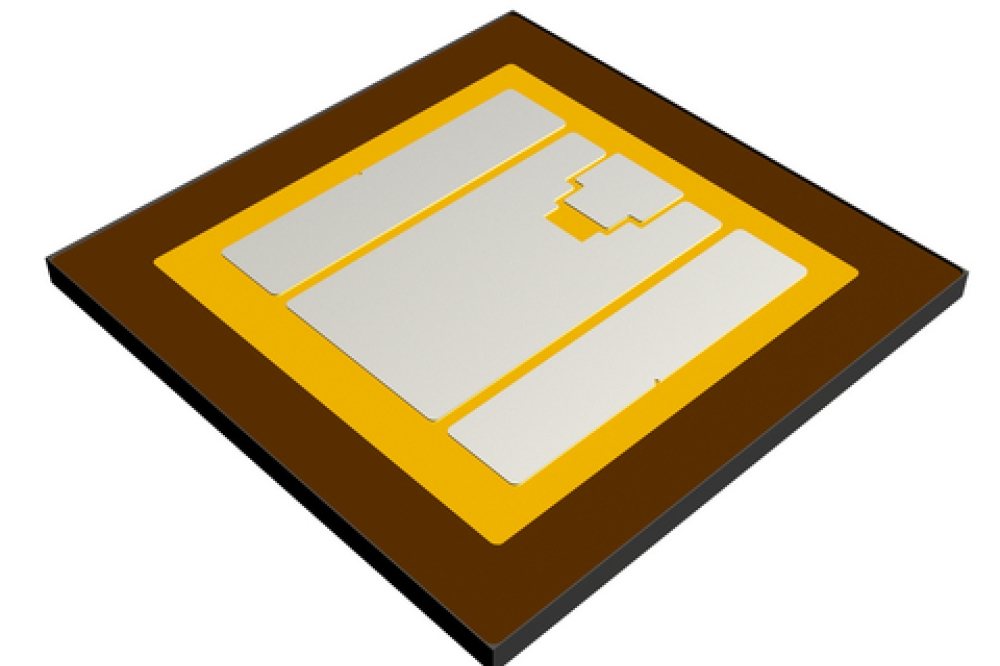

To date, the company has released two ultra-low-power AI processors, with a third processor under development. The existing processors integrate custom neural network cores into 40nm ULP (Ultra-Low Power) silicon chips – silicon that is designed to minimize power consumption and leakage current for IoT, wearables and battery-powered applications. The processors contain dual neural processing units, a RISC-V core, and hardware DSPs tailored for on-device, always-on AI processing. Multi-sensor fusion supports myriad inputs, including microphones and accelerometers, so the processor can detect voice commands, gestures, and environmental sounds simultaneously.

“[In many applications] the focus has been on the voice, but we need to detect full motion; is a person running or sitting, are they snoring or laughing,” said Elkhatib. “We want to detect all of these emotions with speech, motion – with everything. That is the only way to really recognise the true state of a system – which in this case is the human body.”

Critically, each chip is designed to operate in the microwatt power range - consuming less than 260µW during full processing and less than 80µW in listening mode - enabling long battery life for IoT devices, wearables, and hearables. “When we present [our processors] to OEMs, we can say, for instance, this will support wake-word recognition, voice command, siren detection plus speaker identification - all of these features within the budget of a few hundred microwatts,” said Elkhatib.
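
To put those figures in context, the arithmetic behind a power budget can be sketched in a few lines of Python. This is a rough illustration rather than AONDevices' own method: the 80µW and 260µW values are the ceilings quoted above, while the function names, the 5% active fraction and the 200mAh, 3.0V cell are hypothetical inputs chosen only to show the calculation.

```python
"""Rough battery-life estimate for an always-on processor (illustrative only)."""

HOURS_PER_YEAR = 24 * 365

# Upper bounds quoted in the article, in microwatts.
LISTEN_UW = 80.0
ACTIVE_UW = 260.0


def average_power_uw(listen_uw: float, active_uw: float, active_fraction: float) -> float:
    """Time-weighted average power when the chip is fully active for a fraction of the time."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_fraction * active_uw + (1.0 - active_fraction) * listen_uw


def battery_life_years(capacity_mah: float, voltage_v: float, avg_power_uw: float) -> float:
    """Ideal runtime in years, ignoring self-discharge and every other load in the device."""
    if avg_power_uw <= 0:
        raise ValueError("avg_power_uw must be positive")
    energy_uwh = capacity_mah * voltage_v * 1000.0  # mAh x V = mWh; x 1000 = microwatt-hours
    return energy_uwh / avg_power_uw / HOURS_PER_YEAR


if __name__ == "__main__":
    # Hypothetical inputs: full processing 5% of the time, 200 mAh cell at 3.0 V.
    avg = average_power_uw(LISTEN_UW, ACTIVE_UW, active_fraction=0.05)
    years = battery_life_years(capacity_mah=200.0, voltage_v=3.0, avg_power_uw=avg)
    print(f"average power: {avg:.1f} uW, estimated life: {years:.2f} years")
```

Because the quoted figures are ceilings, the result is a conservative floor for the processor alone; real lifetimes depend on the actual draw, the rest of the system and the battery chosen.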

Elkhatib and AONDevices colleagues have also developed an online tool suite for creating, training and deploying machine learning models for edge AI devices. The platform enables data collection via, say, microphones or accelerometers, and synthetic data augmentation so training can take place on small datasets. While traditional AI training typically requires extensive data collection, the platform generates accurate models with as few as 100 samples. Building lightweight machine learning models for the ultra-low-power silicon is part of the platform, as is performance testing to ensure models perform in the real world.

Elkhatib highlighted how the learned parameters, or weights, of her company's micro machine learning models occupy less than 50 kilobytes – incredibly small, and suitable for on-device inference. “The AONDevices platform is optimised to guarantee small ML models without compromising on accuracy,” she said. “So we end up with efficient deep learning for voice, sound, context, any type of pattern recognition, that is always running on super-low power.”

But what about privacy? Always-on devices have raised significant privacy concerns because they constantly monitor their environment to detect user activity - yet Elkhatib is adamant this will not be the case for AONDevices' technology. “Some features in, say, a smart watch use an app to transmit data to the cloud,” she said. “We don't want that and will reject those devices – and that's where our technology also comes into a big play.”

Reaching markets

So with the tech developed and demonstrated, Elkhatib and AONDevices colleagues have their sights firmly fixed on markets. Market forecasts are solid: the latest figures from The Business Research Company forecast the event-driven audio edge chip market to grow from today's $2.06 billion to $4.06 billion in 2030, corresponding to an 18.6% CAGR. Meanwhile, for the global sensor fusion market, Roots Analysis predicts an 18.32% CAGR, with the market reaching $55.69 billion by 2035. Fortune Business Insights forecasts the global wearable AI market to reach $359.32 billion by 2034, at a 24.7% CAGR over the forecast period.
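
For readers who want to sanity-check the first of these forecasts, the compound annual growth rate relation can be sketched as follows. The dollar figures and the 18.6% rate come from the forecast above; the function names are hypothetical, and the four-year horizon is an assumption, since the forecast gives only "today" and 2030 as its endpoints.

```python
import math


def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over a number of years."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("all inputs must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def years_implied(start_value: float, end_value: float, rate: float) -> float:
    """Number of years needed to grow from start to end at a fixed annual rate."""
    if start_value <= 0 or end_value <= 0 or rate <= 0:
        raise ValueError("all inputs must be positive")
    return math.log(end_value / start_value) / math.log(1.0 + rate)


if __name__ == "__main__":
    # Market sizes in billions of dollars; the four-year horizon is an assumption.
    print(f"CAGR over 4 years: {cagr(2.06, 4.06, 4):.1%}")
    print(f"years implied at 18.6%: {years_implied(2.06, 4.06, 0.186):.2f}")
```

Under that assumption the quoted market sizes and growth rate agree to within rounding.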

AONDevices has worked with Taiwan-based ASIC design services and silicon IP provider, Faraday Technology, for several years, and in March 2025 announced plans to bolster production and ensure scalable manufacturing - positioning the company to capture a share of this growth with its AI processors. As the IC design partner of UMC (United Microelectronics Corporation), Faraday can offer priority access to advanced semiconductor manufacturing processes to help support reliable production amid fluctuating market conditions. “Faraday's experience and priority access to UMC's manufacturing capabilities provide us with the scalability and reliability necessary to meet the growing demand for our products,” highlighted Elkhatib.

The latest partnerships with P-Logic, TDK InvenSense and Realtek are also clear signals that AONDevices is edging towards wider commercial application. As Elkhatib put it: “Today we have wins in headsets and remote-control [devices], and we're moving into wearables – these are the low hanging fruits.”

But as the CEO pointed out, the technology can apply to any always-on device. Citing TVs and kitchen appliances, she highlighted how these devices should consume very little power in standby to meet EU energy label standards. “Large system-on-chips cannot run continuously on low power but [if the device also has] a chip like ours, it can,” she said.